Unlocking the Potential of Small AI Models in Deep Reasoning

Artificial intelligence (AI) continues its rapid evolution, pushing the boundaries of what machines can understand and achieve. Recent studies indicate that small AI models can perform well on complex reasoning tasks that were long treated as the domain of much larger models. If these results hold up, they could change how AI systems are built and deployed.

Examining the Advantages of Small AI

For years the prevailing view was simple: bigger models are better. Emerging results, however, suggest that smaller models trained with the right methods can do far better than their size would predict, particularly on deep reasoning tasks. Researchers have reported that models of roughly 1.5 billion to 7 billion parameters can reach high accuracy on competition mathematics, in some cases scoring well enough to place within the top 20% of human competitors.

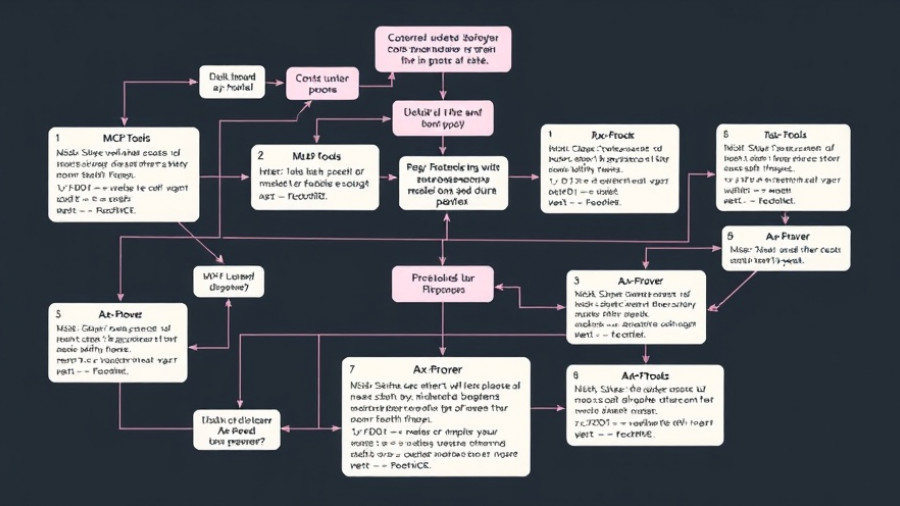

One example is rStar-Math, a method from Microsoft Research. It applies Monte Carlo Tree Search (MCTS) while solving a problem: the model breaks the problem into intermediate steps, explores many candidate paths through those steps, and favors the paths that a separate small reward model scores as promising. This structured search not only compensates for some of the usual weaknesses of smaller models but also improves their step-by-step logical reasoning.
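To make the search idea concrete, here is a minimal, generic MCTS sketch in Python. It is not rStar-Math's implementation: rStar-Math reportedly uses a language model to propose steps and a learned reward model to score them, whereas this sketch assumes a hypothetical toy problem (reach 10 from 1 using the steps `+1`, `+3`, and `*2` in at most five moves), random rollouts, and a simple 0/1 reward on the final answer. It shows the four standard MCTS phases: selection with the UCT rule, expansion, simulation, and backpropagation.

```python
import math
import random
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical toy problem standing in for a math question:
# a "solution" is a sequence of steps that turns START into TARGET.
START, TARGET, MAX_DEPTH = 1, 10, 5
STEPS = {"+1": lambda x: x + 1, "+3": lambda x: x + 3, "*2": lambda x: x * 2}


def apply_steps(path):
    value = START
    for step in path:
        value = STEPS[step](value)
    return value


def is_terminal(path):
    # Values only grow, so overshooting the target ends the attempt.
    return apply_steps(path) >= TARGET or len(path) >= MAX_DEPTH


def reward(path):
    # Outcome-only reward: 1.0 if the finished path hits the target exactly.
    return 1.0 if apply_steps(path) == TARGET else 0.0


@dataclass
class Node:
    path: tuple
    parent: Optional["Node"] = None
    children: dict = field(default_factory=dict)
    visits: int = 0
    value_sum: float = 0.0

    def untried_steps(self):
        return [s for s in STEPS if s not in self.children]

    def uct_child(self, c=1.4):
        # UCT: average reward (exploitation) plus a bonus for rarely visited children.
        return max(
            self.children.values(),
            key=lambda ch: ch.value_sum / ch.visits
            + c * math.sqrt(math.log(self.visits) / ch.visits),
        )


def mcts(iterations=2000, seed=0):
    rng = random.Random(seed)
    root = Node(path=())
    best_path = None
    for _ in range(iterations):
        node = root
        # 1. Selection: descend while the node is fully expanded and not terminal.
        while not is_terminal(node.path) and not node.untried_steps():
            node = node.uct_child()
        # 2. Expansion: add one untried step as a new child node.
        if not is_terminal(node.path):
            step = rng.choice(node.untried_steps())
            child = Node(path=node.path + (step,), parent=node)
            node.children[step] = child
            node = child
        # 3. Simulation: finish the attempt with random steps.
        rollout = list(node.path)
        while not is_terminal(rollout):
            rollout.append(rng.choice(list(STEPS)))
        r = reward(rollout)
        if r == 1.0 and best_path is None:
            best_path = tuple(rollout)  # remember the first correct solution found
        # 4. Backpropagation: update visit counts and reward sums up to the root.
        while node is not None:
            node.visits += 1
            node.value_sum += r
            node = node.parent
    return root, best_path
```

Calling `root, path = mcts()` returns the search tree and the first step sequence that reached the target. In a language-model setting, each step would be a generated line of reasoning rather than an arithmetic operation, and the random rollout would typically be replaced by model-guided continuation and scoring.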

Comparison with Traditional Larger Models

Larger models store vast amounts of information, yet they still often struggle with reasoning and comprehension, especially in multi-step problems that require chains of logical deduction. Rather than exploring alternatives and checking intermediate steps, they tend to produce fast, pattern-matched answers. This gap highlights the value of capabilities such as continuous learning and adaptable reasoning, areas in which smaller models may excel.

Microsoft researchers have introduced several approaches aimed at strengthening the reasoning of both small and large language models. These include refining architectural designs, incorporating mathematical reasoning techniques, and improving generalization across disciplines. The goal is AI that performs tasks precisely and reliably, particularly in high-stakes fields such as healthcare and scientific research.

Future Predictions for AI Deep Reasoning

Looking ahead, small models could become serious contenders for reasoning tasks. Their ability to emulate human-like problem solving, such as breaking a problem into parts and adjusting strategy when an approach fails, makes them agile tools for a wide range of challenges. Newer methods such as Logic-RL, which applies rule-based reinforcement learning to logic puzzles, are likely to push these models further and strengthen their place in the AI landscape.
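Logic puzzles suit reinforcement learning because their answers can be checked automatically, so the reward can come from fixed rules instead of a learned model. The sketch below illustrates that idea and is not Logic-RL's code. It assumes a hypothetical Knights-and-Knaves-style puzzle (knights always tell the truth, knaves always lie), a hypothetical output format with `<think>` and `<answer>` tags, and illustrative reward values. A brute-force solver produces the ground truth, and the hypothetical `rule_based_reward` function scores a model's completion against it.

```python
import itertools
import re

# Hypothetical puzzle: each statement maps an assignment {name: is_knight} to True/False.
PEOPLE = ["A", "B"]
STATEMENTS = {
    "A": lambda w: not w["B"],         # A says: "B is a knave."
    "B": lambda w: w["A"] and w["B"],  # B says: "A and I are both knights."
}


def solve(people, statements):
    """Try every assignment; keep those where each statement is true
    exactly when its speaker is a knight."""
    solutions = []
    for values in itertools.product([True, False], repeat=len(people)):
        world = dict(zip(people, values))
        if all(statements[p](world) == world[p] for p in people):
            solutions.append(world)
    return solutions


# Assumed output format: reasoning in <think>...</think>, final answer in <answer>...</answer>.
FORMAT_RE = re.compile(r"^<think>.*?</think>\s*<answer>(.*?)</answer>\s*$", re.DOTALL)
CLAIM_RE = re.compile(r"\b([A-Z])\s+is\s+a\s+(knight|knave)\b")


def rule_based_reward(completion, truth):
    """Score a completion with fixed rules; the -2/-1/+1 values are illustrative."""
    match = FORMAT_RE.match(completion.strip())
    if match is None:
        return -2.0  # malformed output: penalize format violations
    claimed = {name: role == "knight" for name, role in CLAIM_RE.findall(match.group(1))}
    return 1.0 if claimed == truth else -1.0
```

In a training loop, this score would serve as the reward for each sampled completion. Because it is computed by rules, a completion earns credit only when its final answer actually matches the puzzle's solution.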

Counterarguments and Challenges

Despite these advantages, skepticism remains about how well small models work in practice. Critics argue that success depends heavily on the context in which a model is deployed. Larger models, while slower and more expensive to run, may still hold an edge in situations that demand deep domain knowledge rather than narrow, well-structured problem solving. The AI community therefore needs to strike a balance between exploring what smaller models can do and acknowledging their limitations.

Actionable Insights for AI Enthusiasts

For individuals and organizations adopting AI, it is worth understanding what small models can do. Because they need less memory and compute, small models can lower serving costs and respond faster, which can improve both user experience and operational efficiency. Techniques like rStar-Math may also help developers get strong reasoning from models small enough to deploy affordably, although search-based methods spend extra compute at inference time.
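A rough way to see the cost difference is to estimate how much memory the model weights alone occupy: parameter count times bytes per parameter. The sketch below assumes 16-bit weights (2 bytes each) and, for comparison, 4-bit quantized weights (0.5 bytes each). The 70-billion-parameter model is a hypothetical large counterpart, and the estimate ignores activations, the KV cache, and any extra compute spent on search.

```python
def weight_memory_gb(num_params: float, bytes_per_param: float = 2.0) -> float:
    """Approximate memory for the weights alone, in GB (1 GB = 1e9 bytes).

    Ignores activations, KV cache, and framework overhead.
    """
    return num_params * bytes_per_param / 1e9


# 1.5B and 7B match the parameter range cited above; 70B is a hypothetical large model.
MODELS = {"1.5B": 1.5e9, "7B": 7e9, "70B (hypothetical)": 70e9}

if __name__ == "__main__":
    for name, params in MODELS.items():
        fp16 = weight_memory_gb(params)       # 2 bytes per parameter
        int4 = weight_memory_gb(params, 0.5)  # 4-bit quantization
        print(f"{name}: ~{fp16:.1f} GB at 16-bit, ~{int4:.2f} GB at 4-bit")
```

Under these assumptions, a 7B model needs about 14 GB for its 16-bit weights versus about 140 GB for a 70B model, roughly the difference between fitting on one high-memory GPU and needing several.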

The Role of Education and Continuous Learning

As small models pave the way to more efficient reasoning, education in AI and machine learning has an essential role to play. Industry experts and academics should collaborate on curricula that cover emerging techniques and the implications of machine reasoning. Equipping new generations with the necessary skills helps ensure that AI continues to progress reliably and responsibly.

Conclusion: The Next Steps in AI Reasoning

The reasoning results achieved by small models prompt a re-evaluation of what emerging, smaller-scale technologies can achieve. To address the limitations of both small and large models, researchers are actively developing methods that strengthen AI reasoning. For anyone following AI development, the takeaway is clear: staying informed and adaptable is not just advantageous but essential in this rapidly changing landscape.
